-

The Integration Debt Nobody Budgets For — And How VCF Eliminates It…

Optionality sounds powerful… until you have to operate it. This is not a debate about which hypervisor is fastest or which Kubernetes distribution has the most GitHub stars. It is a more fundamental question: what does it cost your organisation to assemble a platform versus deploying one? And as AI workloads enter the data centre, that question has never carried higher stakes.

🔷 1. The Illusion of Flexibility

Modern infrastructure platforms arrive with a compelling pitch: choose your compute, pick your storage, define your networking, add Kubernetes, extend to AI later. At first glance, this looks like…

-

Why VCF with VKS is a Stronger Enterprise Choice Than KubeVirt

KubeVirt is a capable open-source project and a legitimate choice in the right context. But when the workload is enterprise AI at scale (GPU clusters, production AI factories, regulated environments), the gap between VKS with VCF and KubeVirt is not a minor preference. It spans architecture, operations, governance, and enterprise transformation strategy.

PREMISE: Let’s Be Honest About KubeVirt First

A technically credible argument never starts by dismissing the competition. KubeVirt is a real, production-used project with genuine strengths. Let’s acknowledge them honestly before making the…

-

Planning a VMware Cloud Foundation 9.0 Upgrade? Start Here…

vmtechie.blog · Infrastructure Tools

I Built a VCF Upgrade Path Planner: Here’s Why

Tool: VCF Upgrade Path Planner · Covers: 8 upgrade paths · Target: VCF 9.0 / 9.0.2

If you’ve ever had to plan a VMware Cloud Foundation upgrade from scratch, you know how scattered the information can be: KB articles here, TechDocs pages there, blog posts from different release cycles, and no single place that ties it all together into a clear, ordered sequence. That frustration is exactly what drove me to build the VCF Upgrade Path Planner. As someone who works with VCF environments day-to-day and runs…

-

How the VCF 9 Fleet Sizer Actually Works

A complete walkthrough of every calculation behind the tool, from raw NVMe capacity to ESA protection factors, NVMe memory tiering, and VCF licence entitlement. No black boxes.

1. What the tool sizes

The VCF 9 Fleet Sizer calculates the minimum number of ESXi hosts required across a VMware Cloud Foundation deployment: one Management Domain and any number of VI Workload Domains. For each domain it independently determines whether CPU, memory, or storage is the binding constraint, and returns the host count driven by the most demanding dimension. The sizer is built specifically for VCF…
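The "most demanding dimension" idea can be illustrated with a minimal sketch. This is not the sizer's actual code: the class names, fields, and numbers below are hypothetical, and the sketch deliberately ignores ESA protection factors, memory tiering, licensing, and any minimum host counts that the real tool accounts for.

```python
import math
from dataclasses import dataclass


@dataclass
class HostSpec:
    """Hypothetical per-host usable capacity (illustrative fields only)."""
    cores: int
    memory_gib: float
    usable_storage_tib: float


@dataclass
class DomainDemand:
    """Hypothetical aggregate demand for one domain."""
    cores: float
    memory_gib: float
    storage_tib: float


def size_domain(demand: DomainDemand, host: HostSpec) -> tuple[str, int]:
    """Return (binding_dimension, host_count) for a single domain.

    Each dimension is sized independently, then the largest result wins.
    """
    hosts_needed = {
        "cpu": math.ceil(demand.cores / host.cores),
        "memory": math.ceil(demand.memory_gib / host.memory_gib),
        "storage": math.ceil(demand.storage_tib / host.usable_storage_tib),
    }
    # The binding constraint is whichever dimension needs the most hosts.
    binding = max(hosts_needed, key=hosts_needed.get)
    return binding, hosts_needed[binding]


if __name__ == "__main__":
    # Made-up numbers, purely for illustration.
    host = HostSpec(cores=64, memory_gib=1024, usable_storage_tib=50)
    demand = DomainDemand(cores=400, memory_gib=9000, storage_tib=120)
    print(size_domain(demand, host))
```

With these made-up inputs, memory needs more hosts than CPU or storage, so memory is reported as the binding constraint.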

-

VCF 9 Fleet Planning Sizer

After several VCF design sessions (navigating management domains, ESA policies, and the new core-based licensing), one thing became clear: we have plenty of docs, but we need more interactive clarity. I built the VCF 9 Fleet Planning Sizer (ESA Only) to help architects model environments quickly.

🔷 VCF 9 Fleet Planning Sizer (ESA Only)

👉 Try it here: https://sizer.vmtechie.blog/

This is an independent planning calculator designed to help architects model:

Why I Built This Tool

Designing VCF 9 isn’t just about adding up VMs. It’s about navigating the “Triple Constraint”: Compute, ESA Storage, and Licensing. In real architecture discussions, we constantly…

-

VCF 9 – Updating the Supervisor Service

Supervisor and VKS clusters are built using a common Kubernetes distribution core, but their Kubernetes versions are delivered differently. Starting with VCF 9, Supervisor Kubernetes releases are delivered independently of vCenter. You can update the Supervisor version by deploying a release from the Supervisor Content Library. In this blog post, we will walk through the Supervisor update process step by step. Let’s get started!

Create and Configure a Subscribed Content Library for Supervisor Images

For vSphere Supervisor, VMware publishes Supervisor images through a content delivery network (CDN). To enable or upgrade vSphere Supervisor, you can create a Subscribed Content…

-

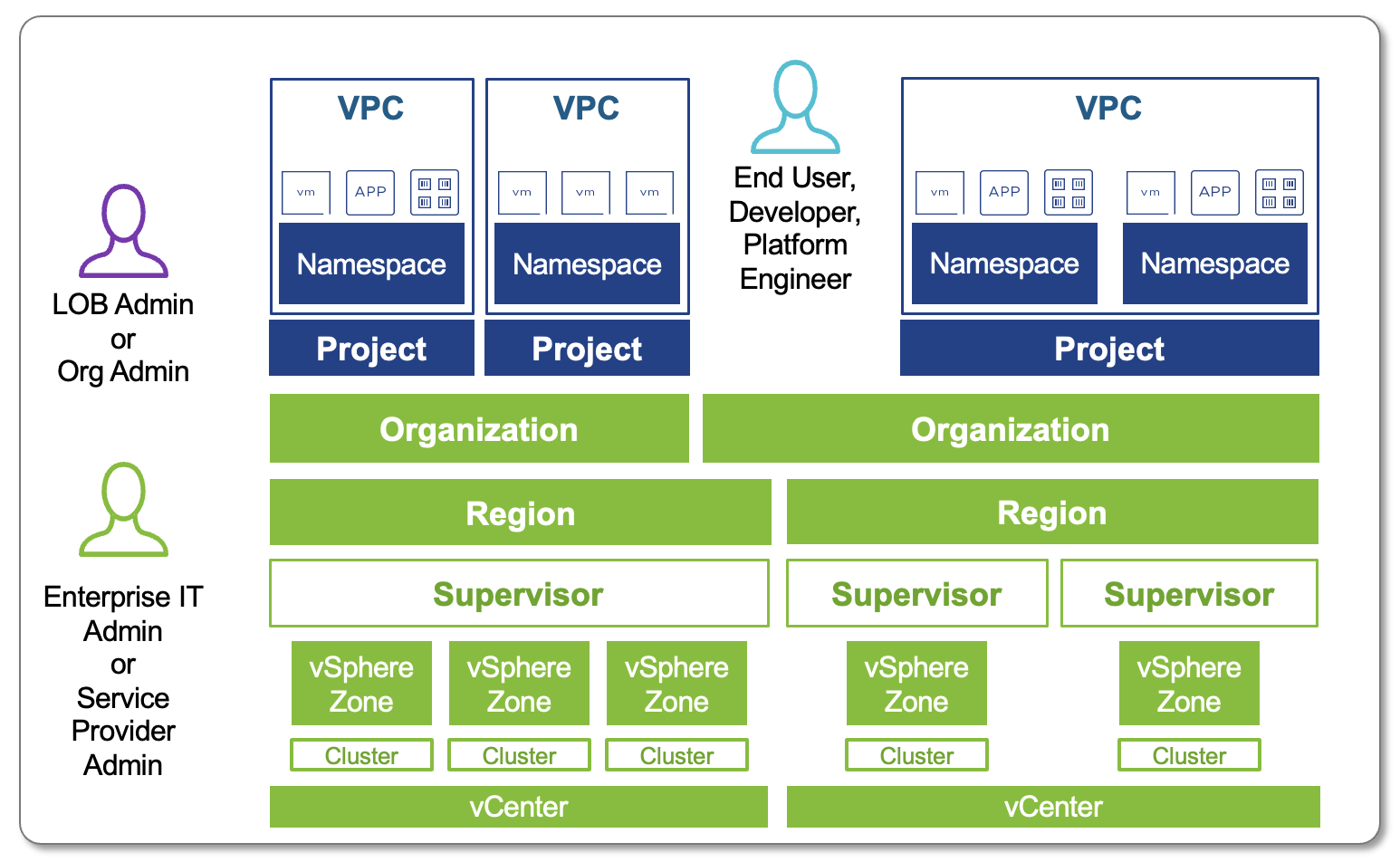

VCF Automation – Tenant Management

In today’s multi-tenant cloud environments, VMware Cloud Foundation Automation (VCFA) offers a robust layered architecture that seamlessly bridges enterprise-grade infrastructure management with developer-ready self-service capabilities. By clearly separating responsibilities (from VMware Cloud Service Providers who manage the physical and virtual infrastructure, to organization administrators who allocate resources, and finally to developers who consume them), VCFA enables efficient resource governance, operational consistency, and scalability. This structured approach not only supports multi-tenancy and workload isolation but also accelerates innovation by empowering end users to deploy applications and services quickly within well-defined boundaries.

Why Tenant Management Matters?

Tenant management is more than just dividing…

-

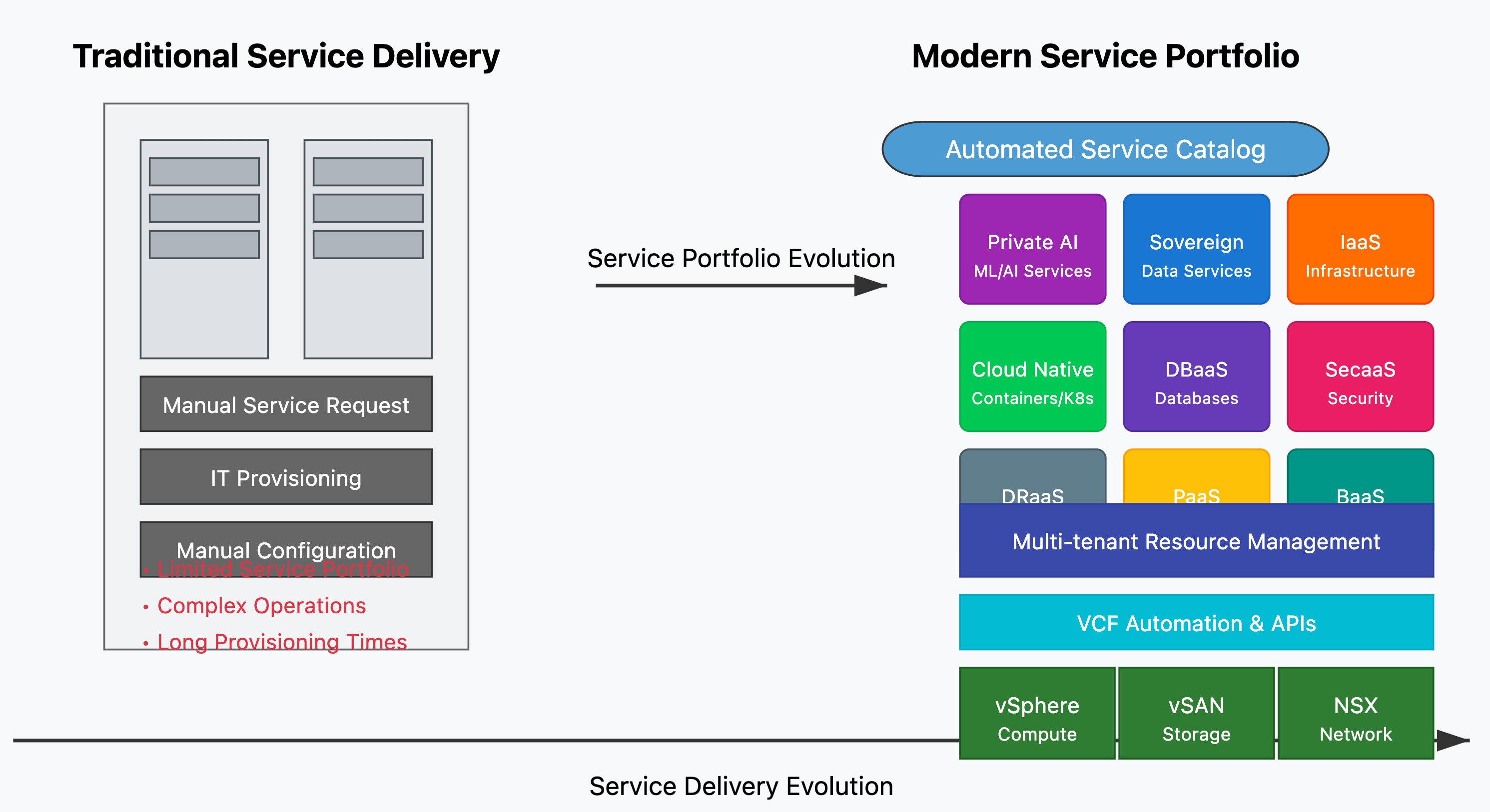

Navigating the Shift: From VMware Cloud Director to VCF Automation in VMware Cloud Foundation 9

VMware Cloud Foundation 9 (VCF 9) has officially launched, introducing a next-generation Cloud Management Platform — VCF Automation (VCFA). This new platform supersedes both Aria Automation and VMware Cloud Director (VCD). This blog is specifically aimed at those familiar with VCD and looking to understand how VCFA compares — what remains familiar, what’s changed, and how to navigate the shift. It’s important to note that VCFA is not a simple rebranding of existing tools. It is a new solution built with purpose, though it incorporates core components from its predecessors. The provider-facing layer, known as Tenant Manager, is built…

-

Integrating VMware Data Services Manager with VMware Cloud Director

In today’s rapidly evolving cloud landscape, integrating robust data management solutions is crucial for maintaining efficiency and scalability. VMware’s Data Services Manager (DSM) offers a comprehensive suite of tools to manage data services, and when integrated with VMware Cloud Director (VCD) and the Data Solutions Extension (DSE), it provides a powerful platform for cloud providers and their tenants. Integrating VMware DSM with VCD and DSE offers several advantages:

- Automation: Integration with VCD’s automation capabilities enables streamlined deployment and management of databases.
- Self-Service DBaaS: Tenants can easily provision and manage databases like MySQL, PostgreSQL, etc., without admin intervention.
- Centralized Management:…

-

Why Customers Should Choose VMware Cloud Service Providers When Transitioning from Public to Private Cloud

As businesses’ cloud strategies evolve, many are reconsidering their reliance on public cloud environments and exploring the benefits of private cloud solutions. Public clouds like AWS, Azure, and Google Cloud offer flexibility and scalability, but they also come with challenges such as unpredictable costs, security concerns, and limited control. This is where VMware Cloud Service Providers (CSPs), powered by VMware Cloud Foundation (VCF), present a compelling alternative for businesses looking to transition from public to private cloud. Here’s why customers should choose a VMware CSP when making this move:

1. Predictable Costs and Better Financial Control

Public Cloud Challenge: The…

-

AI/ML with VMware Cloud Director

AI/ML, short for artificial intelligence (AI) and machine learning (ML), represents an important evolution in computer science and data processing that is quickly transforming a vast array of industries.

Why is AI/ML important?

It’s no secret that data is an increasingly important business asset, with the amount of data generated and stored globally growing at an exponential rate. Of course, collecting data is pointless if you don’t do anything with it, but these enormous floods of data are simply unmanageable without automated systems to help. Artificial intelligence, machine learning and deep learning give organizations a way to extract value out of…

-

Code to Container with Tanzu Build Service

Tanzu Build Service uses the open-source Cloud Native Buildpacks project to turn application source code into container images. Build Service executes reproducible builds that align with modern container standards, and additionally keeps images up to date. It does so by leveraging Kubernetes infrastructure with kpack, a Cloud Native Buildpacks Platform, to orchestrate the image lifecycle. Tanzu Build Service helps customers develop and automate containerized software workflows securely and at scale. In this post, Tanzu Build Service will monitor a Git branch and automatically build a container image with every push. It will then upload that image to your image registry for you to pull down and run locally…

-

Deploy Tanzu Kubernetes Clusters using Tanzu Mission Control

VMware Tanzu Mission Control is a centralized management platform for consistently operating and securing your Kubernetes infrastructure and modern applications across multiple teams and clouds. Available through VMware Cloud services, Tanzu Mission Control provides operators with a single control point to give developers the independence they need to drive business forward, while ensuring consistent management and operations across environments for increased security and governance. Use Tanzu Mission Control to manage your entire Kubernetes footprint, regardless of where your clusters reside.

Getting Started with Tanzu Mission Control

To get started with Tanzu Mission Control, use VMware Cloud Services tools to gain access to VMware Tanzu…

-

Deploy Harbor Registry on TKG Clusters

Tanzu Kubernetes Grid Service, informally known as TKGS, lets you create and operate Tanzu Kubernetes clusters natively in vSphere with Tanzu. You use the Kubernetes CLI to invoke the Tanzu Kubernetes Grid Service and provision and manage Tanzu Kubernetes clusters. The Kubernetes clusters provisioned by the service are fully conformant, so you can deploy all types of Kubernetes workloads you would expect. vSphere with Tanzu leverages many reliable vSphere features to improve the Kubernetes experience, including vCenter SSO, the Content Library for Kubernetes software distributions, vSphere networking, vSphere storage, vSphere HA and DRS, and vSphere security. Harbor is an open…

-

Integrate Azure Files with Azure VMware Solution

Azure VMware Solution is a VMware validated solution with ongoing validation and testing of enhancements and upgrades. Microsoft manages and maintains the private cloud infrastructure and software, which allows customers to focus on developing and running workloads in their private clouds. In this blog post, I will configure virtual machines running on Azure VMware Solution to access Azure Files over an Azure private endpoint. This is an end-to-end, four-step process, described below and explained in this video:

Here is the step-by-step process for configuring and accessing Azure Files on Azure VMware Solution:

Step 01: Deploy Azure…

-

Windows Bare Metal Servers on NSX-T overlay Networks

In this post, I will configure a Windows 2016/2019 bare metal server as a transport node in NSX-T, and then configure an NSX-T overlay segment on that server, which allows VMs and the bare metal server on the same network to communicate. To use NSX-T Data Center on a Windows physical server (bare metal server), let’s first understand a few terms used in this post.

- Application: represents the actual application running on the physical server, such as a web server or a database server.
- Application Interface: represents the network interface…

-

Cloud Native Runtimes for Tanzu

Dynamic Infrastructure

This is an IT concept whereby underlying hardware and software can respond dynamically and more efficiently to changing levels of demand. Modern cloud infrastructure built on VMs and containers requires automated:

- Provisioning, Orchestration, Scheduling
- Service Configuration, Discovery and Registry
- Network Automation, Segmentation, Traffic Shaping and Observability

What is Cloud Native Runtimes for Tanzu?

Cloud Native Runtimes for VMware Tanzu is a Kubernetes-based platform to deploy and manage modern serverless workloads. Cloud Native Runtimes for Tanzu is based on Knative, and runs on a single Kubernetes cluster. Cloud Native Runtimes automates all the aspects of dynamic infrastructure…

-

Quick Tip – Delete Stale Entries on Cloud Director CSE

Container Service Extension (CSE) is a VMware vCloud Director (VCD) extension that helps tenants create and work with Kubernetes clusters. CSE brings Kubernetes as a Service to VCD by creating customized VM templates (Kubernetes templates) and enabling tenant users to deploy fully functional Kubernetes clusters as self-contained vApps. If a tenant’s cluster creation gets stuck and continues to show “CREATE:IN_PROGRESS” or “Creating” for many hours, the cluster creation has failed for an unknown reason and the defined entity representing it has not transitioned to the ERROR state.

Solution

To fix this, the provider admin needs to get in…

-

VMware Cloud Director Assignable Storage Policies to Entity Types

Service providers can use storage policies in VMware Cloud Director to create a tiered storage offering such as Gold, Silver and Bronze, or even offer dedicated storage to tenants. With the enhancement of storage policies to support VMware Cloud Director entities, providers now have the flexibility to control how tenants use the storage policies. Providers can offer not only tiered storage, but also isolated storage for running VMs, containers, edge gateways, catalogs and so on. A common use case that this Cloud Director 10.2.2 update addresses is the need for shared storage across clusters or offering lower cost storage for non-running workloads. For example, instead of having…

-

Auto Scale Applications with VMware Cloud Director

Starting with VMware Cloud Director 10.2.2, tenants can auto scale applications depending on the current CPU and memory utilization. Depending on predefined criteria for CPU and memory use, VMware Cloud Director can automatically scale up or down the number of VMs in a selected scale group. Cloud Director Scale Groups are a new top-level object that tenants can use to implement automated horizontal scale-in and scale-out events on a group of workloads. You can configure auto scale groups with:

- A source vApp template
- A load balancer network
- A set of rules for growing or shrinking the group based on the CPU and memory…
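To make the idea of grow and shrink rules concrete, here is a minimal sketch of threshold-based horizontal scaling. It is not Cloud Director's rule engine or API: the function name, the thresholds, the one-VM step size, and the group limits are all assumptions chosen for illustration.

```python
def desired_vm_count(
    current: int,
    cpu_pct: float,
    mem_pct: float,
    *,
    grow_above: float = 80.0,    # hypothetical scale-out threshold (%)
    shrink_below: float = 20.0,  # hypothetical scale-in threshold (%)
    min_vms: int = 1,            # hypothetical lower bound of the group
    max_vms: int = 10,           # hypothetical upper bound of the group
) -> int:
    """Return the target VM count after evaluating one grow and one shrink rule."""
    if cpu_pct > grow_above or mem_pct > grow_above:
        # Scale out by one VM when either metric is running hot.
        target = current + 1
    elif cpu_pct < shrink_below and mem_pct < shrink_below:
        # Scale in by one VM only when both metrics are idle.
        target = current - 1
    else:
        target = current
    # Never leave the configured group size limits.
    return max(min_vms, min(max_vms, target))


if __name__ == "__main__":
    print(desired_vm_count(3, cpu_pct=90, mem_pct=40))  # one metric hot
    print(desired_vm_count(3, cpu_pct=10, mem_pct=10))  # both metrics idle
```

Requiring both metrics to be idle before shrinking, while growing as soon as either one is hot, is a conservative design choice for this sketch; a real rule set may combine conditions differently.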

-

vSphere Tanzu with AVI Load Balancer

With the release of vSphere 7.0 Update 2, VMware adds a new, production-ready load balancer option for vSphere with Tanzu deployments. This load balancer is called NSX Advanced Load Balancer (also known as NSX ALB or AVI Load Balancer). It provides virtual IP addresses for the Supervisor Control Plane API server, the TKG guest cluster API servers and any Kubernetes applications that require a service of type LoadBalancer. In this post, I will go through a step-by-step deployment of the new NSX ALB along with vSphere with Tanzu. VLAN…

-

Tanzu Basic – Building TKG Cluster

In continuation of our Tanzu Basic deployment series, this is the last part. By now we have our vSphere with Tanzu cluster enabled and deployed, so the next step is to create Tanzu Kubernetes Clusters. In case you missed the previous posts, here they are:

- Getting Started with Tanzu Basic
- Tanzu Basic – Enable Workload Management

Create a new namespace

A vSphere Namespace is a kind of resource pool or container that I can give to a project, team or customer as a “Kubernetes+VM environment” where they can create and manage their application containers and virtual machines. They can’t…

-

Tanzu Basic – Enable Workload Management

Continuing from the last post, where we deployed VMware HA Proxy, we will now enable a vSphere cluster for Workload Management by configuring it as a Supervisor Cluster.

Part 1: Getting Started with Tanzu Basic

What is Workload Management

With Workload Management we can deploy and operate the compute, networking, and storage infrastructure for vSphere with Kubernetes. vSphere with Kubernetes transforms vSphere to a platform for running Kubernetes workloads natively on the hypervisor layer. When enabled on a vSphere cluster, vSphere with Kubernetes provides the capability to run Kubernetes workloads directly on ESXi hosts and to create upstream Kubernetes clusters within dedicated resource pools…

-

Load Balancer as a Service with Cloud Director

NSX Advanced Load Balancer’s (AVI) intent-based software load balancer provides scalable application delivery across any infrastructure. AVI provides 100% software load balancing to ensure a fast, scalable and secure application experience. It delivers elasticity and intelligence across any environment. It scales from 0 to 1 million SSL transactions per second in minutes. It achieves 90% faster provisioning and 50% lower TCO than the traditional appliance-based approach. With the release of Cloud Director 10.2, NSX ALB is natively integrated with Cloud Director to provide self-service Load Balancing as a Service (LBaaS), where providers can release load balancing functionality to…

-

Configuring Ingress Controller on Tanzu Kubernetes Grid

Contour is an open source Kubernetes ingress controller providing the control plane for the Envoy edge and service proxy. Contour supports dynamic configuration updates and multi-team ingress delegation out of the box while maintaining a lightweight profile. In this blog post, I will deploy the ingress controller along with a load balancer (the LB was deployed in this post). You can also expose the Envoy proxy as a NodePort, which allows you to access your service on each k8s node.

What is Ingress in Kubernetes

“NodePort” and “LoadBalancer” let you expose a service by specifying that value in the service’s type. Ingress, on…

The Latest

- Navigating the Shift: From VMware Cloud Director to VCF Automation in VMware Cloud Foundation 9
- Integrating VMware Data Services Manager with VMware Cloud Director
- The Integration Debt Nobody Budgets For — And How VCF Eliminates It…
- How the VCF 9 Fleet Sizer Actually Works
- VCF 9 Fleet Planning Sizer
- VCF Automation – Tenant Management
- Why Customers Should Choose VMware Cloud Service Providers When Transitioning from Public to Private Cloud
- AI/ML with VMware Cloud Director
- Windows Bare Metal Servers on NSX-T overlay Networks
- Quick Tip – Delete Stale Entries on Cloud Director CSE
- VMware Cloud Director Assignable Storage Policies to Entity Types
- Auto Scale Applications with VMware Cloud Director
- Tanzu Basic – Building TKG Cluster
- Load Balancer as a Service with Cloud Director
- From Virtualization to Cloud Service Delivery with VMware Cloud Foundation & VCSPs
- Enhancing Firewall Flexibility in VMware Cloud Director 10.6.1