Dynamic Infrastructure

Dynamic infrastructure is an IT concept whereby the underlying hardware and software respond dynamically and efficiently to changing levels of demand. Modern cloud infrastructure built on VMs and containers requires automated:

- Provisioning, Orchestration, Scheduling

- Service Configuration, Discovery and Registry

- Network Automation, Segmentation, Traffic Shaping and Observability

What is Cloud Native Runtimes for Tanzu?

Cloud Native Runtimes for VMware Tanzu is a Kubernetes-based platform to deploy and manage modern serverless workloads. It is based on Knative and runs on a single Kubernetes cluster. Cloud Native Runtimes automates all of the dynamic infrastructure requirements listed above.

Serverless ≠ FaaS

| Serverless | FaaS |
| --- | --- |
| Multi-threaded (server) | Single-threaded functions |
| Cloud provider agnostic | Cloud provider specific |
| Long-lived (days) | Short-lived (minutes) |
| Offers more flexibility | Managing a large number of functions can be tricky |

Cloud Native Runtime Installation

Command Line Tools Required for Cloud Native Runtimes for Tanzu

Download and install the following command line tools on the client workstation from which you will connect to and manage the Tanzu Kubernetes cluster and Tanzu Serverless.

kubectl (Version 1.18 or newer)

- Using a browser, navigate to the Kubernetes CLI Tools download URL for your environment (available in the vCenter Namespace).

- Select the operating system and download the vsphere-plugin.zip file.

- Extract the contents of the ZIP file to a working directory. The vsphere-plugin.zip package contains two executable files: kubectl and the vSphere Plugin for kubectl. kubectl is the standard Kubernetes CLI. kubectl-vsphere is the vSphere Plugin for kubectl, which helps you authenticate with the Supervisor Cluster and Tanzu Kubernetes clusters using your vCenter Single Sign-On credentials.

- Add the location of both executables to your system’s PATH variable.

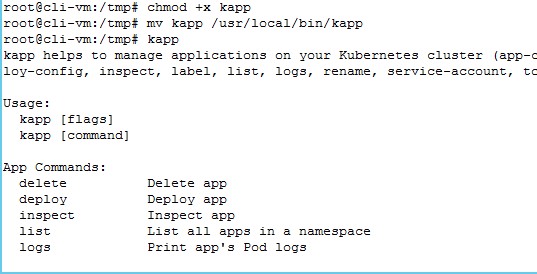
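As a minimal sketch of the PATH step, assuming the ZIP was extracted to a hypothetical $HOME/vsphere-plugin directory with the executables under bin, a small helper (prepend_path is an illustrative name) can prepend that directory only when it is not already listed:

```shell
# prepend_path DIR: put DIR at the front of PATH unless it is already listed.
prepend_path() {
    case ":$PATH:" in
        *":$1:"*) ;;                # already on PATH, leave it unchanged
        *) PATH="$1:$PATH" ;;
    esac
    export PATH
}

# Example (hypothetical extraction directory):
#   prepend_path "$HOME/vsphere-plugin/bin"
```

Adding the same call to your shell profile would make the change persist across sessions.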

kapp (Version 0.34.0 or newer)

kapp is a lightweight application-centric tool for deploying resources on Kubernetes. Being both explicit and application-centric it provides an easier way to deploy and view all resources created together regardless of what namespace they’re in. Download and Install as below:

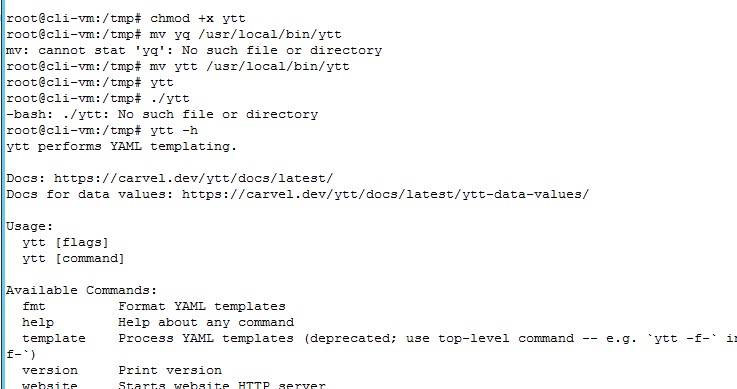

ytt (Version 0.30.0 or newer)

ytt is a templating tool that understands YAML structure. Download, Rename and Install as below:

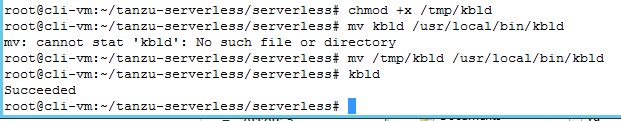
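The download, rename and install pattern used for these tools can be sketched as a small helper. This is only an illustration: install_binary is a hypothetical name, and the downloaded file name in the example is an assumption about what the release asset is called on your platform.

```shell
# install_binary SRC NAME [DEST_DIR]
# Copies the downloaded file SRC into DEST_DIR under the short name NAME
# and marks it executable. DEST_DIR defaults to /usr/local/bin.
install_binary() {
    src=$1
    name=$2
    dest_dir=${3:-/usr/local/bin}
    cp "$src" "$dest_dir/$name" && chmod 0755 "$dest_dir/$name"
}

# Example (asset name is an assumption; /usr/local/bin may need sudo):
#   install_binary ytt-linux-amd64 ytt
```

Passing a third argument such as "$HOME/bin" avoids the need for elevated permissions, provided that directory is on your PATH.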

kbld (Version 0.28.0 or newer)

kbld orchestrates image builds (delegating to tools like Docker, pack and kubectl-buildkit) and registry pushes. It works with the local Docker daemon and remote registries, for both development and production use.

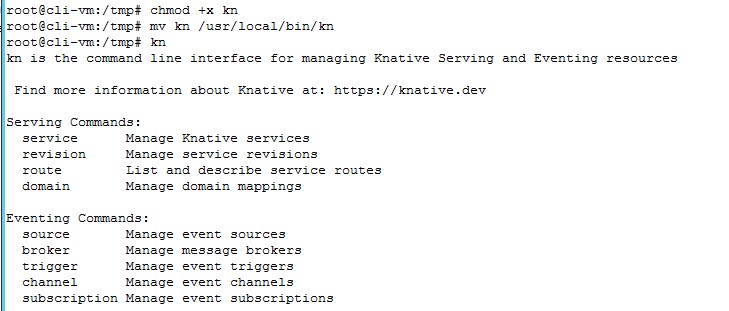
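With several CLIs to install, each with a minimum version, a quick check before moving on can save time. The sketch below uses two hypothetical helpers: missing_tools prints any tool that is not on PATH, and version_ge compares dot-separated numeric versions (it does not handle suffixes such as "+vmware.1").

```shell
# missing_tools NAME...: print each named tool that is not found on PATH.
missing_tools() {
    for mt_tool in "$@"; do
        command -v "$mt_tool" >/dev/null 2>&1 || printf '%s\n' "$mt_tool"
    done
}

# version_ge HAVE WANT: succeed when HAVE >= WANT.
# Fields are compared numerically, left to right; missing fields count as 0.
version_ge() {
    vg_have=$1
    vg_want=$2
    while [ -n "$vg_have" ] || [ -n "$vg_want" ]; do
        vg_h=${vg_have%%.*}
        vg_w=${vg_want%%.*}
        # Drop the field just read; clear the string when no dot remains.
        case $vg_have in *.*) vg_have=${vg_have#*.} ;; *) vg_have= ;; esac
        case $vg_want in *.*) vg_want=${vg_want#*.} ;; *) vg_want= ;; esac
        [ "${vg_h:-0}" -gt "${vg_w:-0}" ] && return 0
        [ "${vg_h:-0}" -lt "${vg_w:-0}" ] && return 1
    done
    return 0
}

# Example:
#   missing_tools kubectl kubectl-vsphere kapp ytt kbld kn
#   version_ge 0.34.1 0.34.0 && echo "kapp is new enough"
```

Each tool reports its version in its own format, so extracting the installed version string to pass to version_ge is left to you.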

The Knative client kn is your door to the Knative world. It allows you to create Knative resources interactively from the command line or from within scripts. Download, Rename and Install as below:

Download Cloud Native Runtimes for Tanzu (Beta)

To install Cloud Native Runtimes for Tanzu, you must first download the installation package from VMware Tanzu Network:

- Log into VMware Tanzu Network.

- Navigate to the Cloud Native Runtimes for Tanzu release page.

- Download the serverless.tgz archive and the release.lock file.

- Create a directory named tanzu-serverless.

- Extract the contents of serverless.tgz into your tanzu-serverless directory:

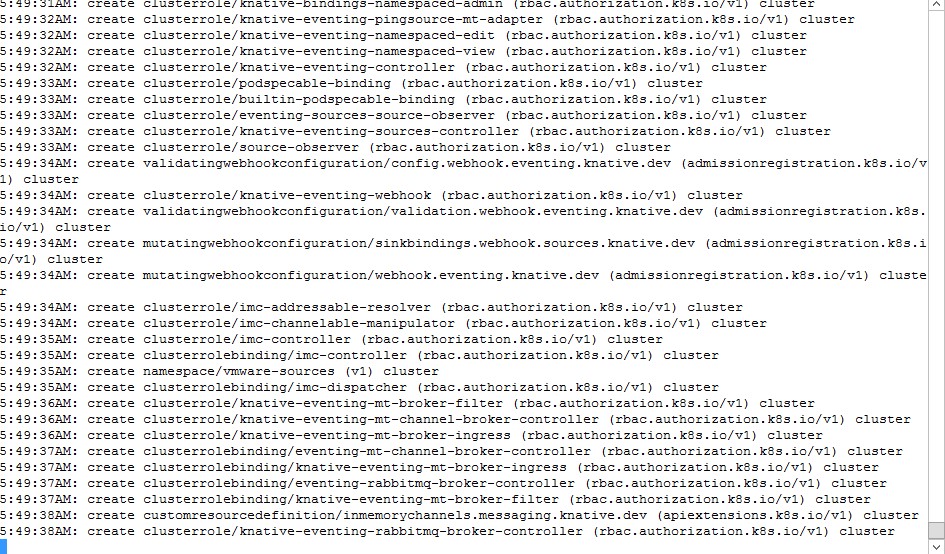

#tar xvf serverless.tgz

Install Cloud Native Runtimes for Tanzu on Tanzu Kubernetes Grid Cluster

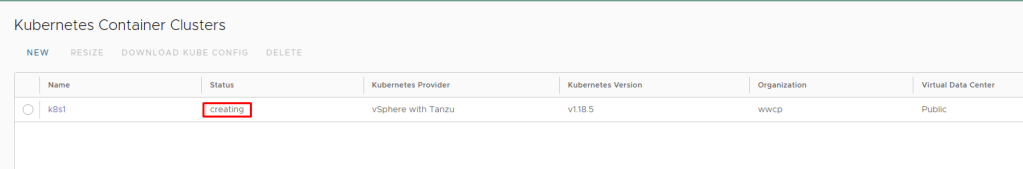

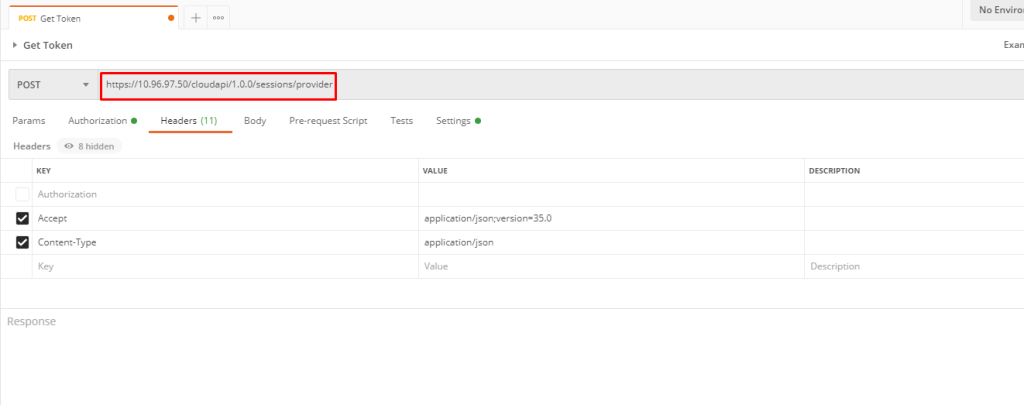

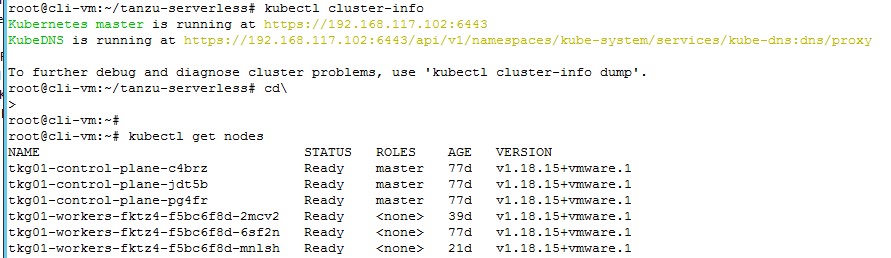

For this installation I am using a TKG cluster deployed on vSphere 7 with Tanzu. To install Cloud Native Runtimes for Tanzu on Tanzu Kubernetes Grid, first target the cluster you want to use and verify that you are targeting the correct Kubernetes cluster by running:

#kubectl cluster-info

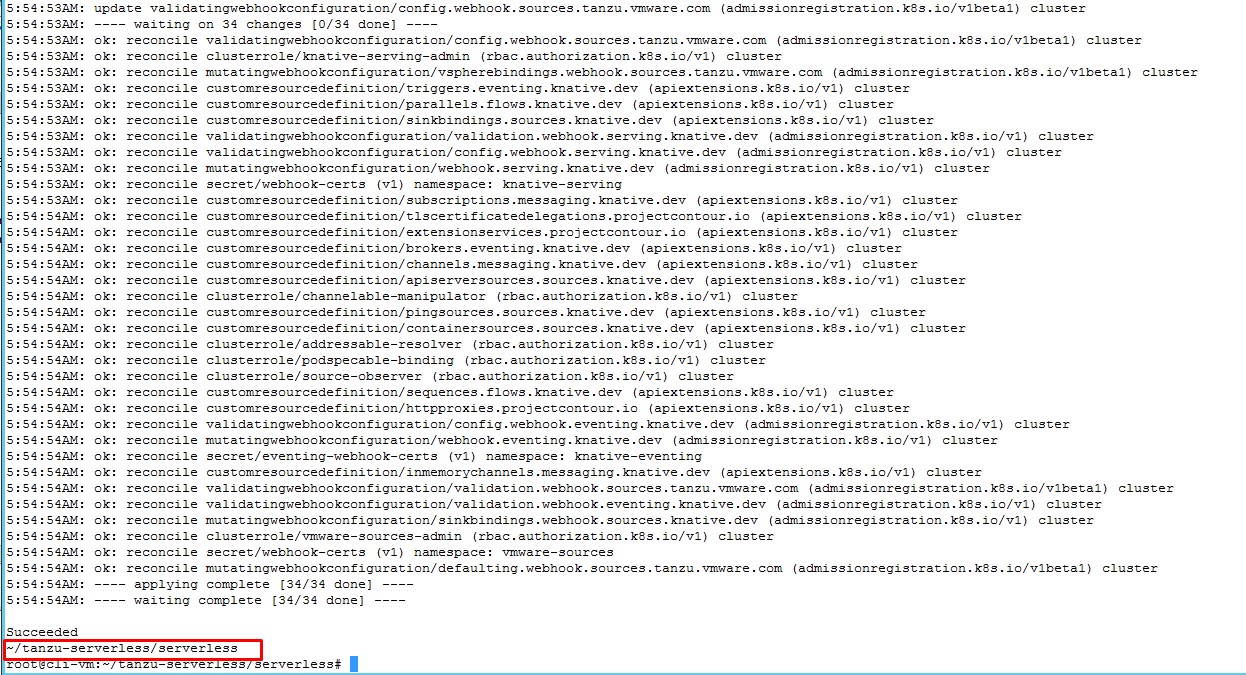

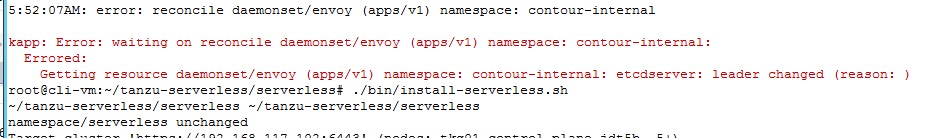
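If you script this check, one possible approach is to compare the current kubectl context with the one you expect before going any further. In this sketch confirm_context is a hypothetical helper and tkg-cluster-01 is a made-up context name; substitute your own.

```shell
# confirm_context EXPECTED: fail unless kubectl currently targets EXPECTED,
# then print the cluster endpoints for a final visual check.
confirm_context() {
    cc_expected=$1
    cc_current=$(kubectl config current-context) || return 1
    if [ "$cc_current" != "$cc_expected" ]; then
        echo "current context is '$cc_current', expected '$cc_expected'" >&2
        return 1
    fi
    kubectl cluster-info
}

# Example (hypothetical context name):
#   confirm_context tkg-cluster-01 || exit 1
```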

Run the installation script from the tanzu-serverless directory and wait for it to complete:

#./bin/install-serverless.sh

During my installation, I faced a couple of issues like this.

I just reran the installation, which automatically fixed these issues.
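Since a plain rerun was enough here, a small retry wrapper is one way to script that behaviour. This is a sketch and not part of the product: retry and RETRY_DELAY are hypothetical names, and the default delay of 10 seconds is arbitrary.

```shell
# retry ATTEMPTS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS runs have failed.
# Waits RETRY_DELAY seconds (default 10) between attempts.
retry() {
    rt_attempts=$1
    shift
    rt_n=1
    while :; do
        "$@" && return 0
        [ "$rt_n" -ge "$rt_attempts" ] && return 1
        echo "attempt $rt_n failed, retrying" >&2
        rt_n=$((rt_n + 1))
        sleep "${RETRY_DELAY:-10}"
    done
}

# Example, run from the tanzu-serverless directory:
#   retry 2 ./bin/install-serverless.sh
```

If the script still fails after the second attempt, it is worth reading its output rather than retrying further.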

Verify Installation

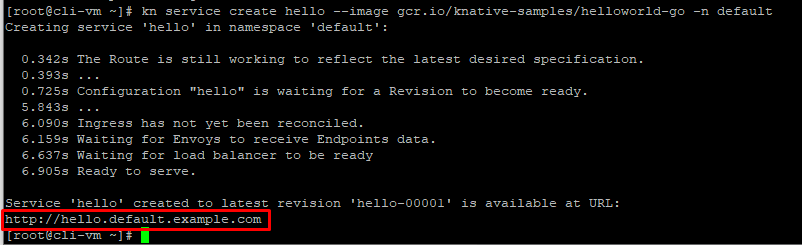

To verify that your serving installation was successful, create an example Knative service. For information about Knative example services, see Hello World – Go in the Knative documentation. Let’s deploy a sample web application using the kn CLI. Run:

#kn service create hello --image gcr.io/knative-samples/helloworld-go -n default

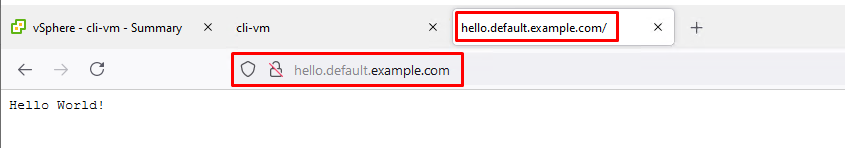

Take the external URL above and either map the Contour IP to its host name in your local hosts file or add a DNS entry, then browse to it. If everything is configured correctly, your first application is running successfully.

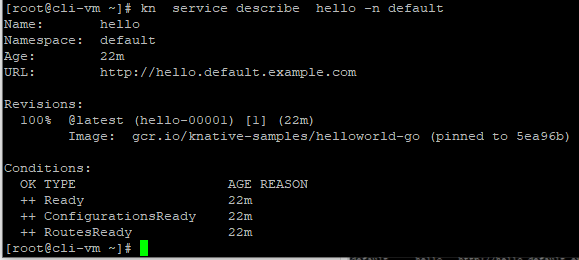
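For the hosts file route, the entry is simply the ingress IP followed by the service host name. The sketch below makes a loud assumption: that the Contour ingress is exposed by a LoadBalancer service named envoy in a namespace named contour-external. Check your own cluster and adjust. hosts_entry and ingress_ip are hypothetical helper names.

```shell
# hosts_entry IP HOSTNAME: print one hosts-file line mapping HOSTNAME to IP.
hosts_entry() {
    printf '%s\t%s\n' "$1" "$2"
}

# ingress_ip: print the external IP of the ingress service.
# ASSUMPTION: service "envoy" in namespace "contour-external"; verify with
# "kubectl get service -A" before relying on these names.
ingress_ip() {
    kubectl get service envoy -n contour-external \
        -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Example:
#   hosts_entry "$(ingress_ip)" hello.default.example.com | sudo tee -a /etc/hosts
```

To test without touching the hosts file at all, you could instead send the host name as a header: curl -H "Host: hello.default.example.com" "http://$(ingress_ip)".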

You can list and describe the service by running the following commands:

#kn service list -A

#kn service describe hello -n default

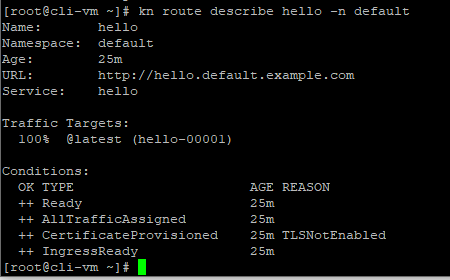

It looks like everything is up and ready as we configured it. You can also use the Knative CLI to describe and list the routes associated with the app:

#kn route describe hello -n default

Create your own app

This demo used an existing Knative example. Why not make our own app from an image? Let’s do it using the YAML below:

    apiVersion: serving.knative.dev/v1
    kind: Service
    metadata:
      name: helloworld
      namespace: default
    spec:
      template:
        spec:
          containers:
            - image: gcr.io/knative-samples/helloworld-go
              ports:
                - containerPort: 8080
              env:
                - name: TARGET
                  value: "This is my app"

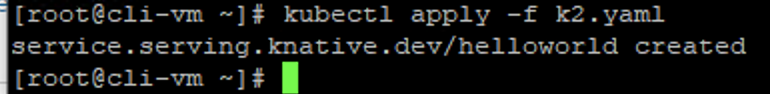

Save this to k2.yaml (or any name you like), then deploy the new service using the kubectl apply command:

#kubectl apply -f k2.yaml

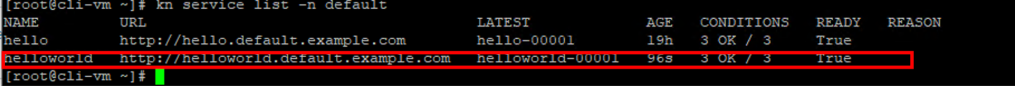

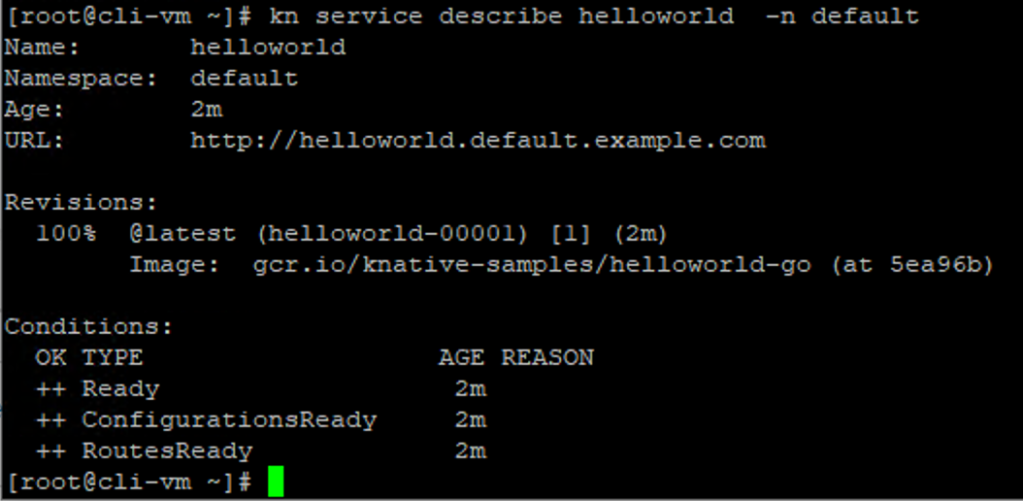

Next, we can list the services and describe the new deployment, using the name provided in the YAML file:

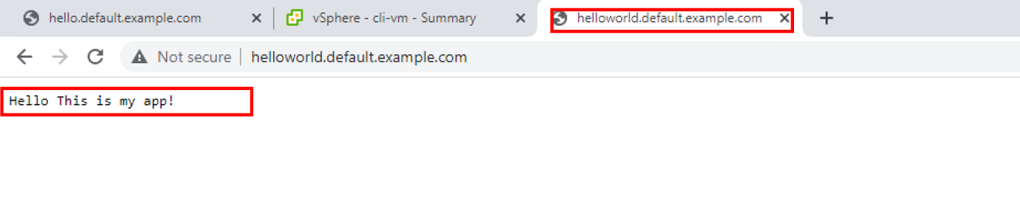
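In text form, this follows the same kn pattern used for the hello service earlier. A minimal sketch, with show_service as a hypothetical wrapper name:

```shell
# show_service NAME [NAMESPACE]
# Lists the Knative services in NAMESPACE (default: "default"),
# then describes the one called NAME.
show_service() {
    ss_name=$1
    ss_ns=${2:-default}
    kn service list -n "$ss_ns" && kn service describe "$ss_name" -n "$ss_ns"
}

# Example, using the name from the YAML file:
#   show_service helloworld
```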

Finally, browse to http://helloworld.default.example.com (you need to add an entry in DNS or in your hosts file).
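Both URLs in this walkthrough follow the same shape: service name, then namespace, then domain. Assuming the cluster still uses that default naming template and the example.com domain, the URL for any service can be sketched as below (ksvc_url is a hypothetical helper name):

```shell
# ksvc_url NAME [NAMESPACE] [DOMAIN]
# Prints the URL a Knative service gets under the default naming template.
# ASSUMPTION: the template and domain have not been customised on the cluster.
ksvc_url() {
    printf 'http://%s.%s.%s\n' "$1" "${2:-default}" "${3:-example.com}"
}

# Example:
#   ksvc_url helloworld
```

If the domain has been changed on your cluster, pass it as the third argument or read the actual URL from the kn service describe output.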

This proves your application is running successfully. Cloud Native Runtimes for Tanzu is a great way for developers to get going quickly with serverless development, with networking, autoscaling (even to zero) and revision tracking that let users see changes in apps immediately. Go ahead and try this in your lab and, once it is GA, in production.